Unless you've spent the last 40 years in a cave, you know what the internet is. As with most modern technology, however, you might not have a firm concept of the underlying principles.

Even more confusing, the way the internet works evolves. The latest generation, Web 3.0, is shaping up to be markedly different from the Web 2.0 technology that came before it.

Are these distinctions mere technicalities? Will they make a noticeable difference in your everyday connected experience – or in your attempts to publish engaging digital content? Here's what to know.

The Evolution of Web 1.0 to Web 2.0

The technology that powers the web has changed dramatically since it first hit the scene, and so have the ways we communicate online:

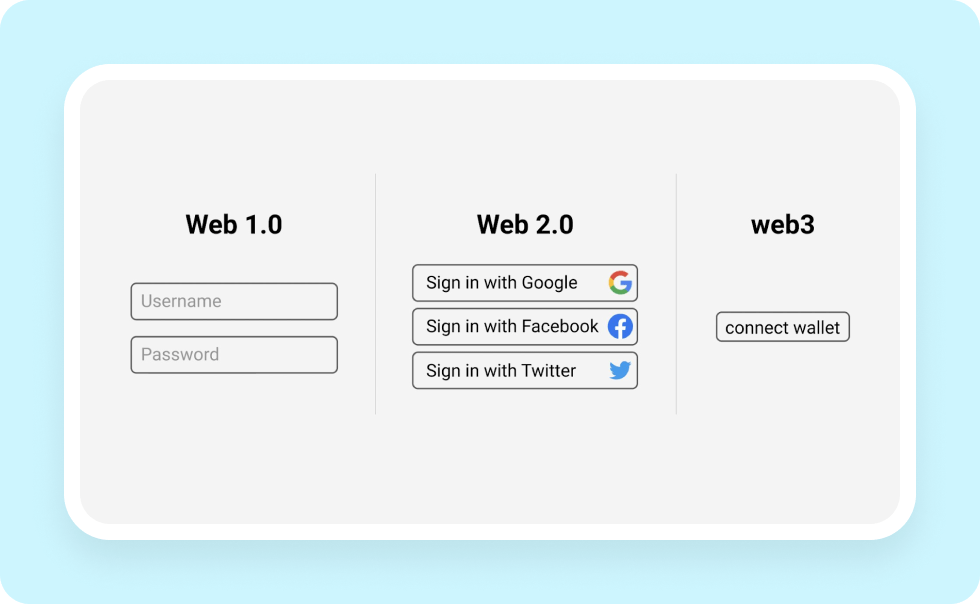

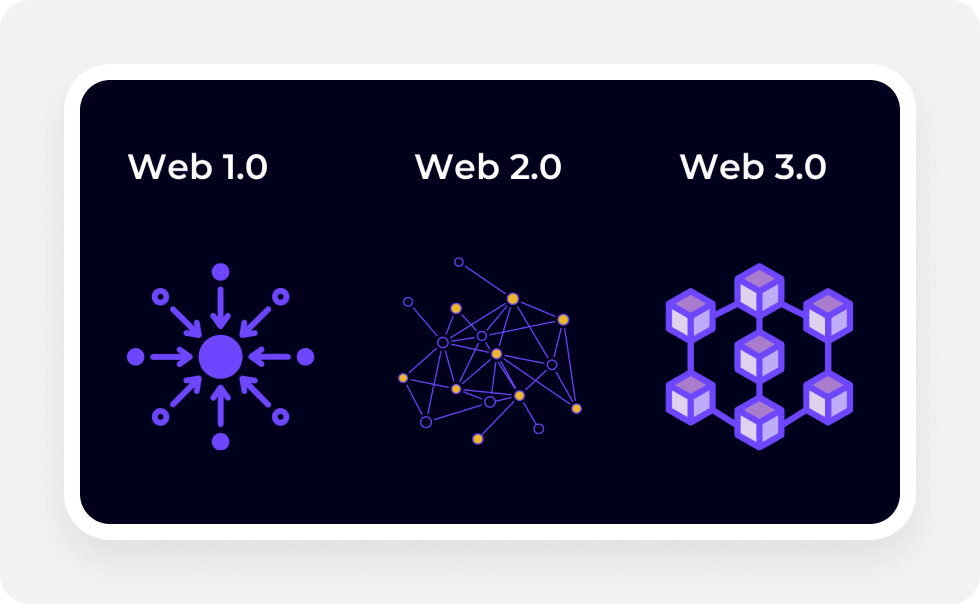

- Web 1.0 was the first generation of the World Wide Web. It got its start in the early 1990s as a static, text-based experience that was anything but interactive. Think internal corporate information pages, text-only bulletin boards, and simplistic messaging systems.

- Web 2.0, the second generation of the World Wide Web, hit the scene around the early 2000s. It's a dynamic, interactive web that lets users communicate and collaborate via blogs, social sites, and reusable functional elements, such as comment sections and chat systems.

- Web 3.0, the third generation of the World Wide Web, is still in development. Although it remains to be seen how it will pan out, early indicators suggest it'll incorporate immersive augmented reality and virtual reality experiences that will allow users to interact with each other and with computer-generated objects in a more realistic way. Most importantly, it'll be far more decentralized than earlier web generations.

It's important to remember that web generations aren't really formal standards. Although they build on official specifications and common best practices, each version leaves it up to the content creators to decide how things work. As these users, companies, and trade bodies develop new ways to share information, new tech gradually seeps onto the consumer web, changing the collective experience for all.

Transitioning to Web 2.0 in the Early 2000s

Web 1.0 was a practical affair. In those days, computers were just emerging as consumer-oriented devices, and most were extremely expensive or difficult to use without programming knowledge. In other words, if you didn't have corporate backing or a professional goal, you'd probably have no reason to mess with a computer – let alone try to get online.

The stage was set for Web 1.0. The earliest version of the web took the form of company-oriented pages, academic sites, and official government information portals – not exactly the most exciting content to browse.

These sites were the very definition of bare-bones. Images and media were practically nonexistent in that era of slow data connections, and page designers only had access to a minimal subset of the HTML tags and features we know and love in modern times. The vast majority of the technological developments that powered the web at this time occurred behind closed doors at companies like Microsoft, Oracle, Apple, IBM, and others.

If you're too young to have done so yourself, then viewing Web 1.0 pages might be a surprisingly underwhelming experience. For one thing, ads were rare, and the majority of the site design elements you'd encounter would take the form of simple tables and frames that contained plain old text. Another big difference is that many ISPs charged you based on how long you stayed connected.

This system could only last for so long – it didn't lend itself to open information sharing, and interactivity was an afterthought. As more people took an interest in getting online, something had to change, and updating the technology turned out to be a good solution.

Web 3.0 as the Possible Future of the Web?

Web 3.0 is the next generation of the web, and it's making major ripples even though it remains in the concept stage. Envisioned as a decentralized system that builds on blockchains and similar technologies, it's predicted to result in improvements to data security, privacy, and user control.

Web 3.0 technologies and standards are still being developed, some of them at the W3C, but the idea has been around for some time. Sir Tim Berners-Lee, the inventor of the World Wide Web and founder of the W3C, came up with the idea around 1999. He proposed a Semantic Web – a web where machines could understand the meaning behind the data they processed and disseminated online.

Things have evolved in the decades since then. Today, most people who talk about Web 3.0 are referring to a more user-centric, decentralized version of the internet.
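To make the Semantic Web idea a little more concrete, here is a minimal Python sketch of data stored as subject-predicate-object statements that a program can query. It is a toy under stated assumptions: the `TRIPLES` list, the `query` helper, and the identifiers inside the statements are all made up for illustration and do not come from any real vocabulary or library.

```python
# Toy illustration of machine-readable meaning: facts stored as
# (subject, predicate, object) triples that a program can query.
# Every name below is example data, not a real vocabulary.
from typing import Optional

Triple = tuple[str, str, str]

TRIPLES: list[Triple] = [
    ("TimBernersLee", "invented", "WorldWideWeb"),
    ("TimBernersLee", "proposed", "SemanticWeb"),
    ("SemanticWeb", "isA", "WebConcept"),
]


def query(
    triples: list[Triple],
    subject: Optional[str] = None,
    predicate: Optional[str] = None,
    obj: Optional[str] = None,
) -> list[Triple]:
    """Return every triple matching the given fields; None matches anything."""
    return [
        (s, p, o)
        for (s, p, o) in triples
        if subject in (None, s) and predicate in (None, p) and obj in (None, o)
    ]


if __name__ == "__main__":
    # "What is TimBernersLee connected to, and how?"
    for s, p, o in query(TRIPLES, subject="TimBernersLee"):
        print(s, p, o)
```

Because each statement has the same three-part shape, software can answer questions about the data without a human reading the page, which is the gist of the original proposal.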

Web 3.0's Current Status, Benefits, and Disadvantages

New technologies like blockchains and AR have already proven themselves in niche commercial and financial applications. Unfortunately, nobody really knows how – or if – they'll actually make the web at large better.

Various examples of Web 3.0 technologies presently exist in the form of decentralized platforms and software that make it easier for individuals to choose how they'll use the internet. The problem is that some of the early enthusiasm for Web 3.0 may be attributable to marketing. After all, what company wouldn't want to promote itself as being at the forefront of innovation?

On the other hand, there's something to be said for hype, especially when it drives publishers and coders to create new solutions for delivering online content. For instance, more companies are exploring ways to support blockchains and other decentralized technologies on their apps and sites, which could make the internet less susceptible to major outages and bad corporate actors. As these novel applications evolve, they could contribute to a more concrete definition of the next generation and reveal whether it's worth the buzz.

How Do Web 2.0 and Web 3.0 Differ?

Although Web 2.0 is more open than Web 1.0, most data and content remain centrally controlled. If you aren't one of a few major corporations, then you have to settle for a small sliver of the attention pie.

Consider companies like Twitter, Google, and Meta. These enterprises control the public-facing platforms they run, such as YouTube and Facebook, along with everything hosted on them. If you've ever read the Terms of Service for one of these sites, you'll also know that users typically grant the company a broad license to the data they create and store on its servers.

In Web 3.0, decentralization is the name of the game. For instance, the microblogging Twitter alternative Mastodon and the YouTube alternative PeerTube are known as federated services. In other words, anyone can host their own instance of these platforms, and instances can connect to each other without being owned by a single publisher.
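The federation idea can be sketched in a few lines of Python. This is a deliberately simplified toy, not how Mastodon or PeerTube actually work: the `Instance` class, its methods, and the example domains are all hypothetical, and real federated platforms rely on shared network protocols that are far more involved.

```python
# Toy model of federation: independent instances that relay posts to peers.
# This is NOT a real platform API; every name here is hypothetical.
from dataclasses import dataclass, field


@dataclass(eq=False)  # compare instances by identity, not by field values
class Instance:
    domain: str
    peers: list["Instance"] = field(default_factory=list)
    timeline: list[str] = field(default_factory=list)

    def federate_with(self, other: "Instance") -> None:
        """Connect two instances so each delivers posts to the other."""
        if other not in self.peers:
            self.peers.append(other)
        if self not in other.peers:
            other.peers.append(self)

    def publish(self, author: str, text: str) -> None:
        """Store a post locally, then deliver a copy to every peer."""
        post = f"{author}@{self.domain}: {text}"
        self.timeline.append(post)
        for peer in self.peers:
            peer.timeline.append(post)


if __name__ == "__main__":
    a = Instance("a.example")
    b = Instance("b.example")
    a.federate_with(b)
    a.publish("alice", "Hello from my own server")
    print(b.timeline)
```

Each instance keeps its own copy of the timeline and has its own operator, so no single publisher controls the whole network.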

Why Should You Understand Web 3.0 in Terms of Web Design?

Just as the jump from Web 1.0 to Web 2.0 was a huge transition, Web 3.0 will probably represent a massive change in how digital content works. For instance, hidden semantic metadata is likely to become an even more essential element of delivering connected user experiences. With big-name publishers shifting towards immersive metaverse and VR experiences, designers will need to think about sharing their messages and content in ways that appeal to users whose expectations are much higher.
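As one illustration of hidden semantic metadata, the Python sketch below builds a JSON-LD snippet using the schema.org `Article` type, which a page can embed in a `<script>` tag for machines to read. The `article_json_ld` helper and the example headline, author, and date are assumptions made for this sketch; which properties a given search engine or platform actually uses is not covered here.

```python
# Sketch: generate schema.org Article metadata as an embeddable JSON-LD tag.
# The helper name and the sample values are hypothetical.
import json


def article_json_ld(headline: str, author: str, date_published: str) -> str:
    """Build a <script> tag holding schema.org Article metadata as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
    }
    # Escape "<" so text in the metadata cannot close the <script> tag early.
    payload = json.dumps(data, indent=2).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    print(article_json_ld("Web 2.0 vs. Web 3.0", "Jane Doe", "2024-01-01"))
```

Visitors never see this block, but software reading the page can tell that it is an article, who wrote it, and when it was published.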

Fortunately, you don't have to become a blockchain or cryptocurrency expert to keep up. Choosing an effective web design tool can make it easier to publish and manage content even as content delivery evolves. To learn more about refining your design workflow with an eye on Web 3.0 compatibility and user-centric collaboration, check out SiteManager today.